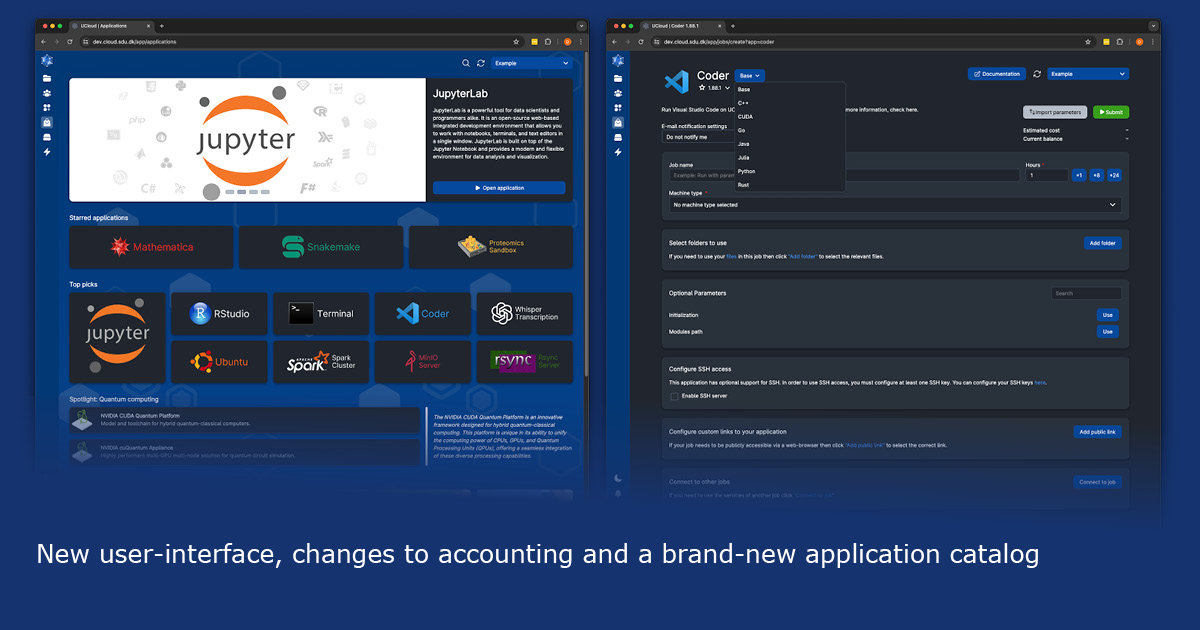

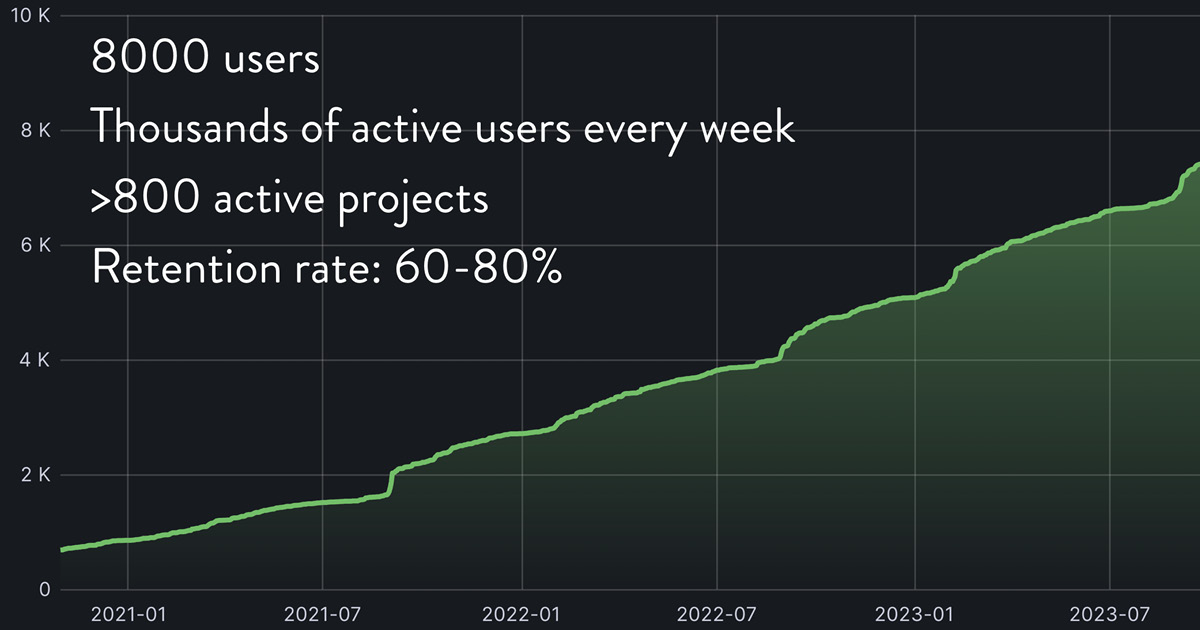

DeiC Interactive HPC is excited to roll out the new user interface on the UCloud platform, designed to simplify processes and enhance the user experience. The updated interface, which serves 10,000 users (and growing), reflects a commitment to delivering an easy-to-use interface that gives researchers access to advanced interactive computing power, along with comprehensive data analysis and visualisation tools.

“The launch of this new user interface marks a significant overhaul. Our team has meticulously redesigned every aspect, from its overall look-and-feel to the functionality of each page. Our primary objective has been to create an enhanced platform for users. We’re excited to see how researchers will benefit from the improved efficiency and usability when engaging with the platform,” says Dan Sebastian Thrane, Special Consultant at the eScience Center, University of Southern Denmark (SDU), and leader of the cloud team, which has been responsible for the development and implementation of the UI update.

Key changes in the new user interface include:

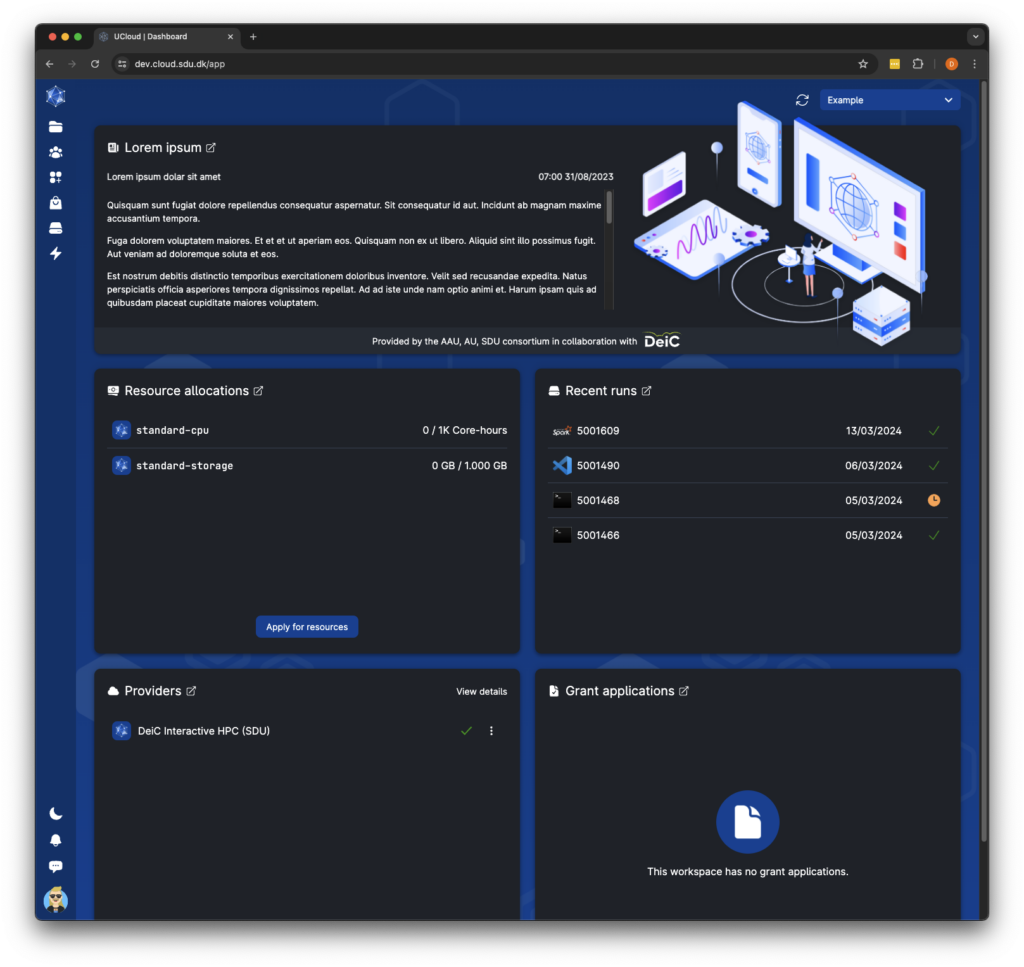

- Restructured dashboard layout to prioritize important information.

- Redesigned application catalogue with improved discoverability features.

- Improved space utilization with keyboard control, infinite scroll and better performance.

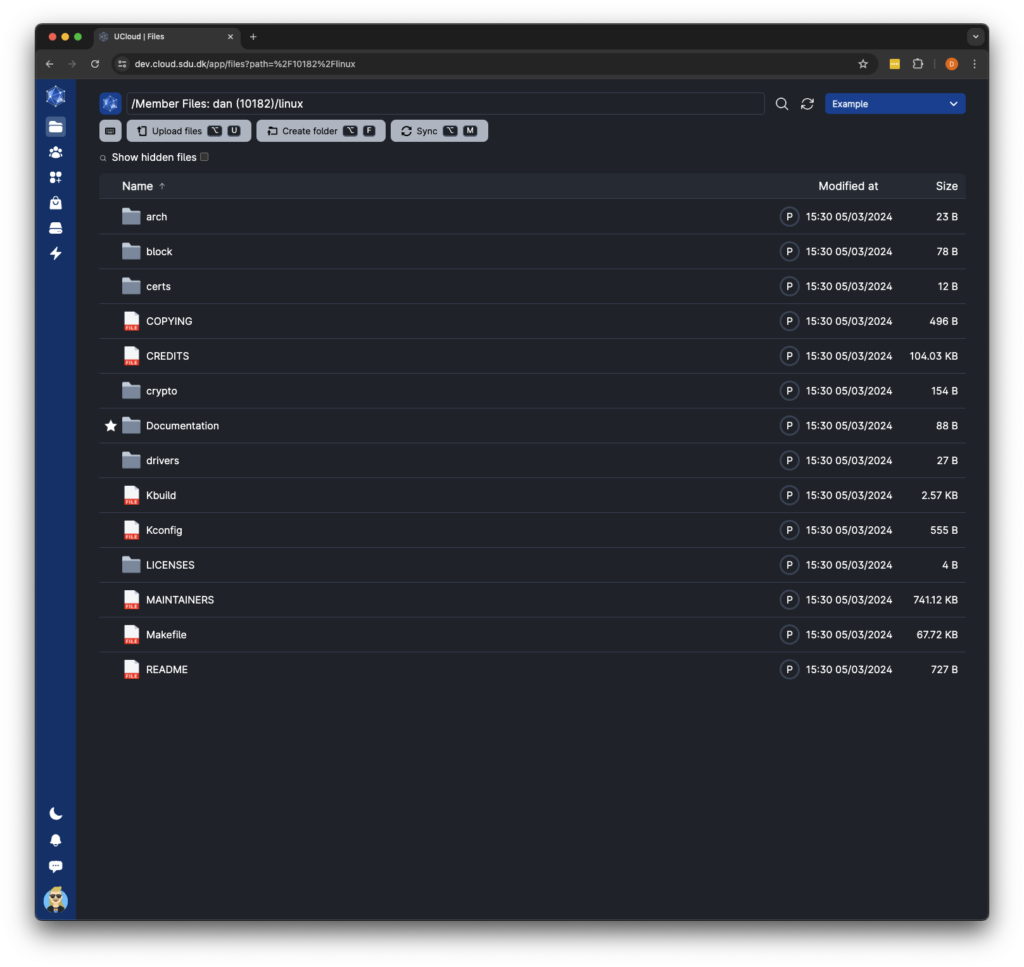

- File management now includes drag-select, drag-and-drop, and copy-paste for quicker access, along with a location bar for easy navigation.

- New two-level sidebar navigation replaces the top navigation bar, making it easier to find and access sub-pages within specific categories.

- Streamlined resource allocations integrate sub-projects, simplifying creation and management. The interface has been redesigned for improved organization and efficiency.

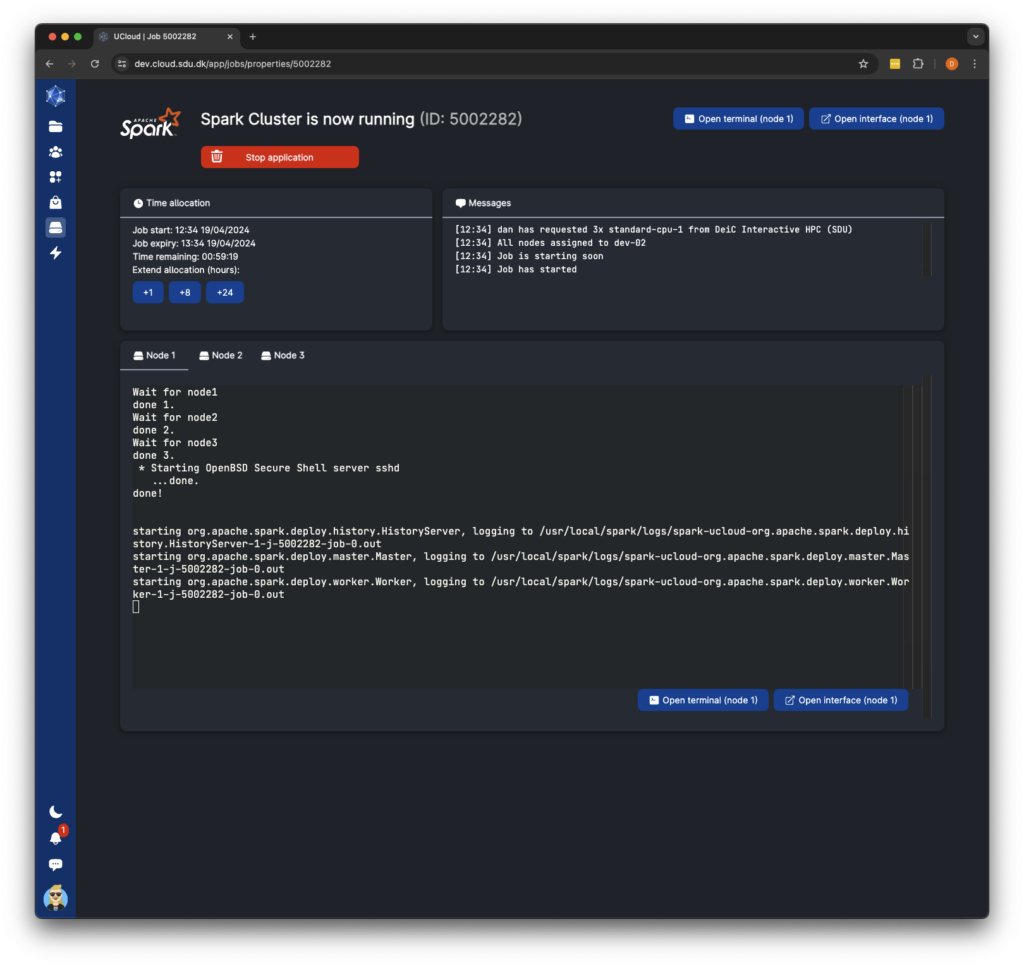

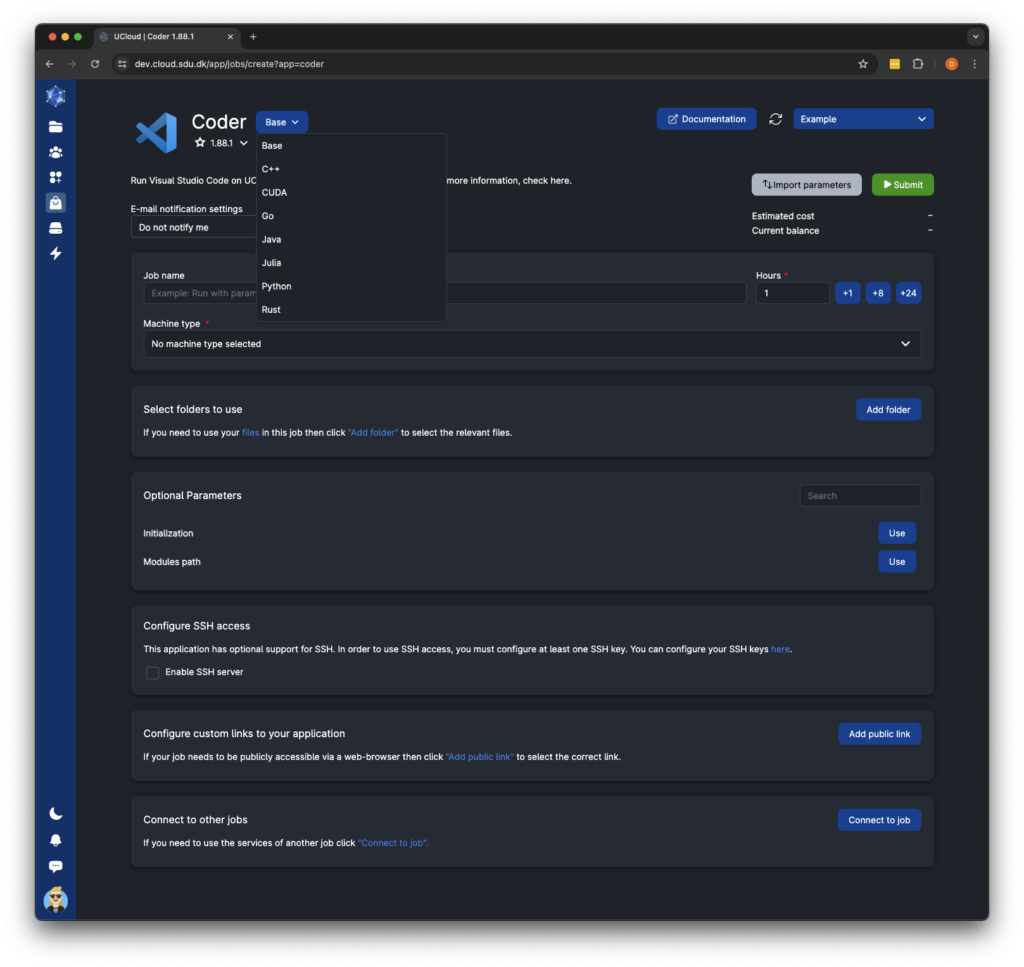

- Job submission enhancements allow users to switch between different app flavors and receive notifications for job status changes.

The updated interface reflects extensive research and a meticulous examination of every aspect of the previous design, with the goal of addressing common pain points and improving both the overall layout and the user experience. Designed with a focus on simplicity, efficiency, and consistency, the new interface aims to empower users while maintaining the core workflow on UCloud. This ensures that researchers can seamlessly manage their data and run applications as they normally would.

Together, these enhancements mark a significant step forward in optimising the digital infrastructure and are available by May 14th, 2024. For further details about the new user interface, changes to accounting, and a brand-new application catalogue, visit UCloud.