Timestamps:

00:00 – Introduction and welcome

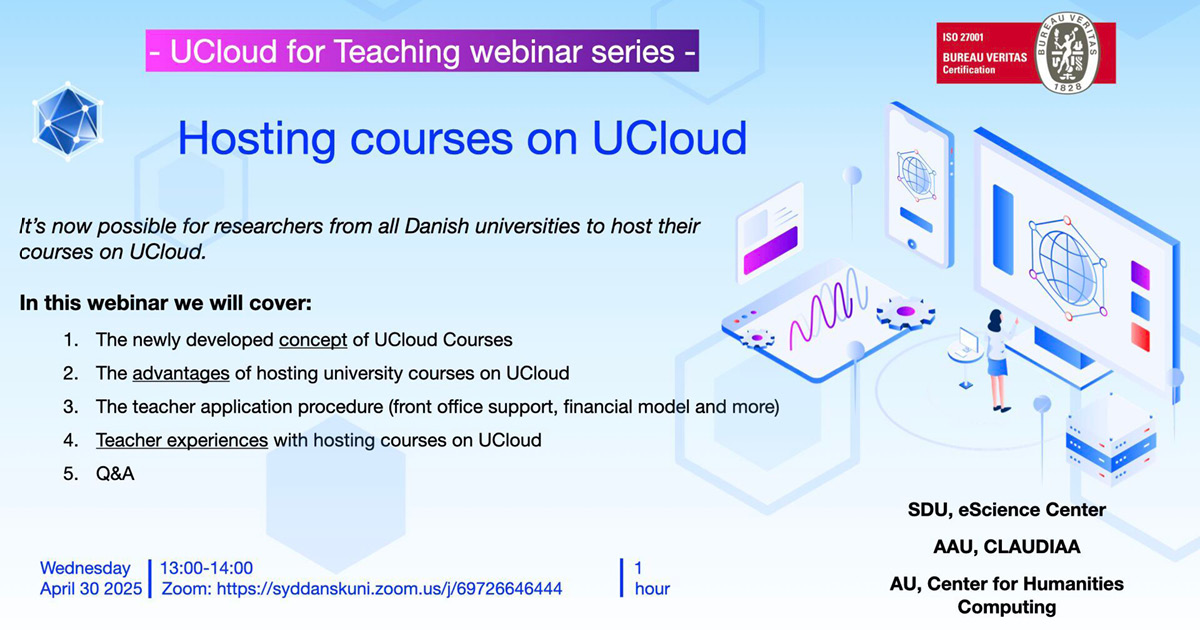

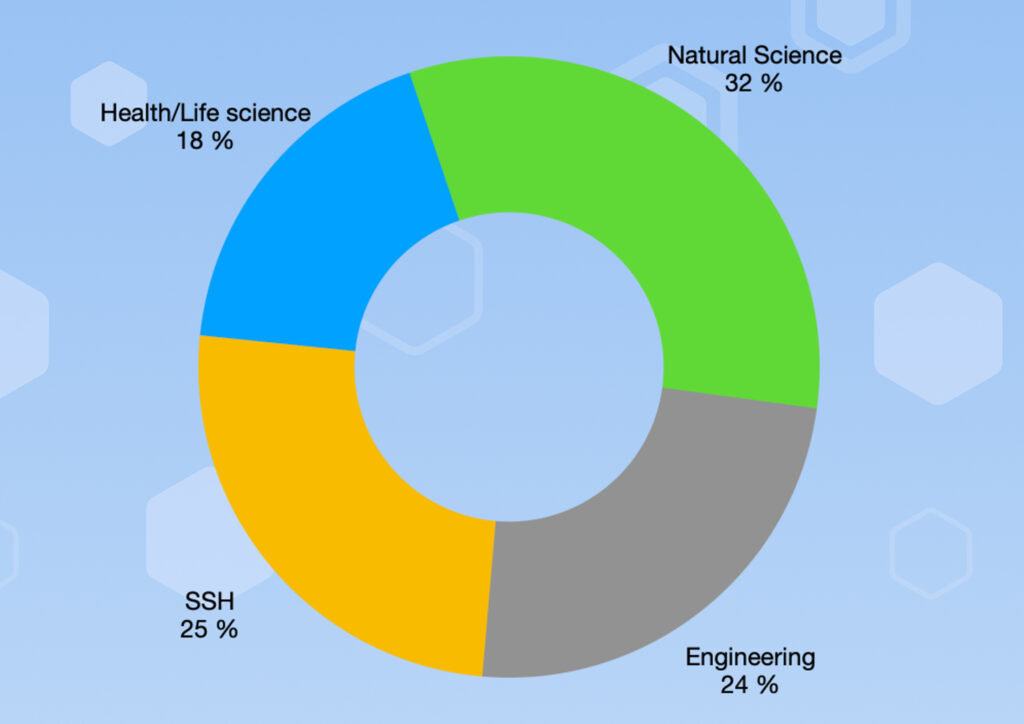

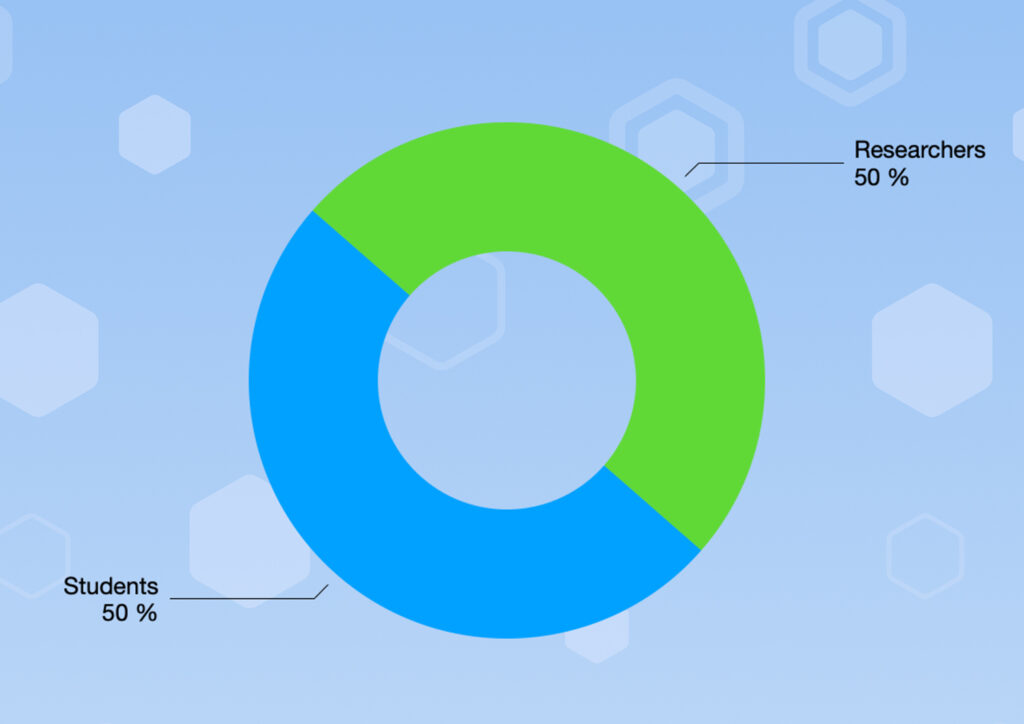

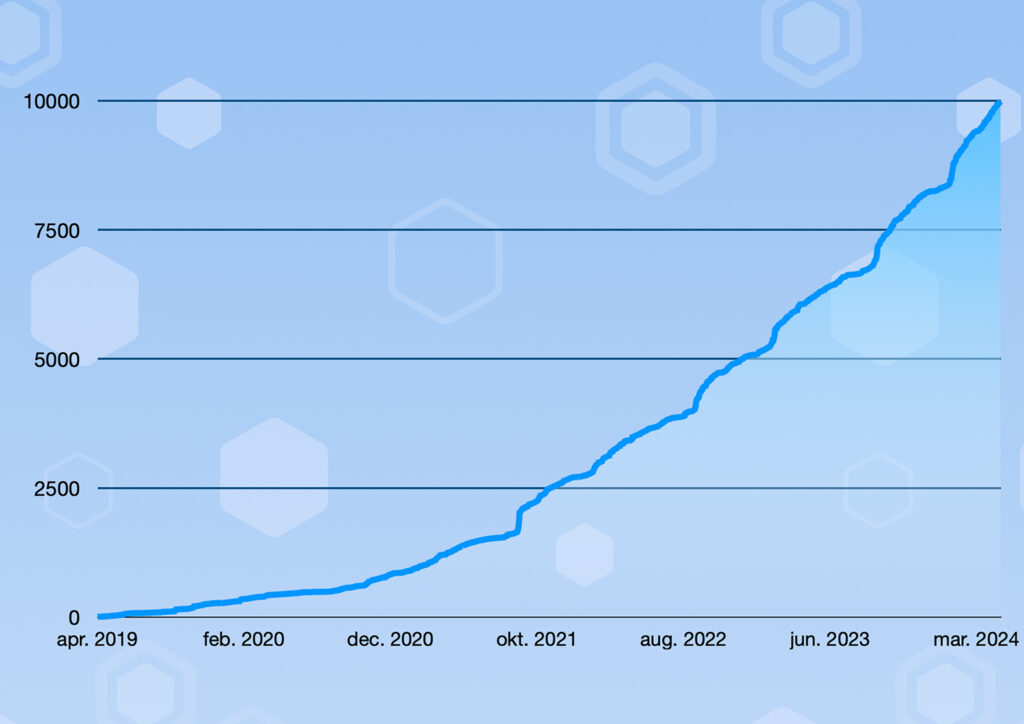

00:50 – Introduction to UCloud

05:27 – DeiC Interactive HPC website

06:07 – UCloud: Log in

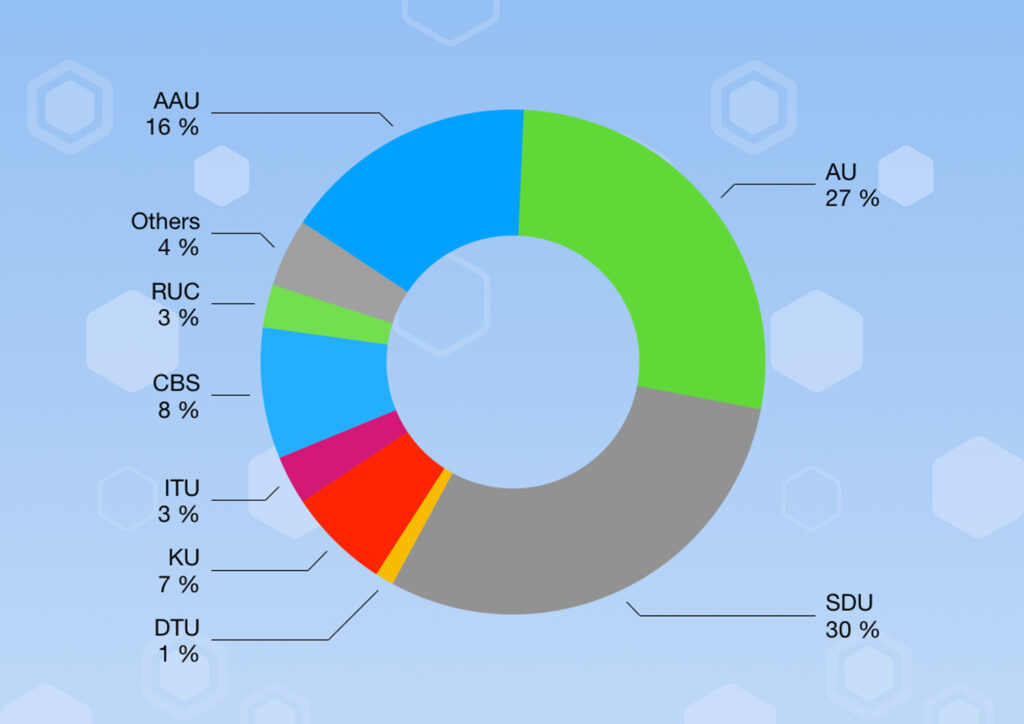

07:06 – UCloud: HPC providers on the UCloud platform

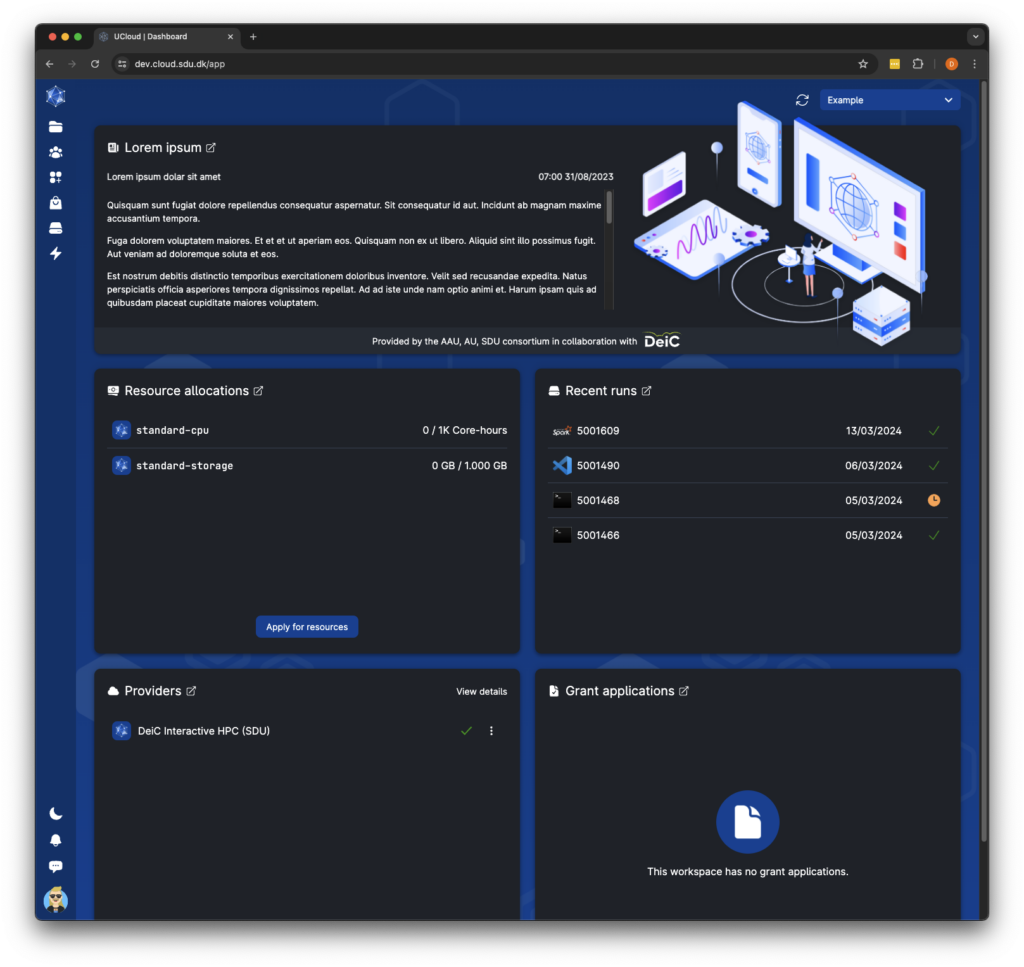

11:08 – UCloud: Initial resource allocations in “My workspace”

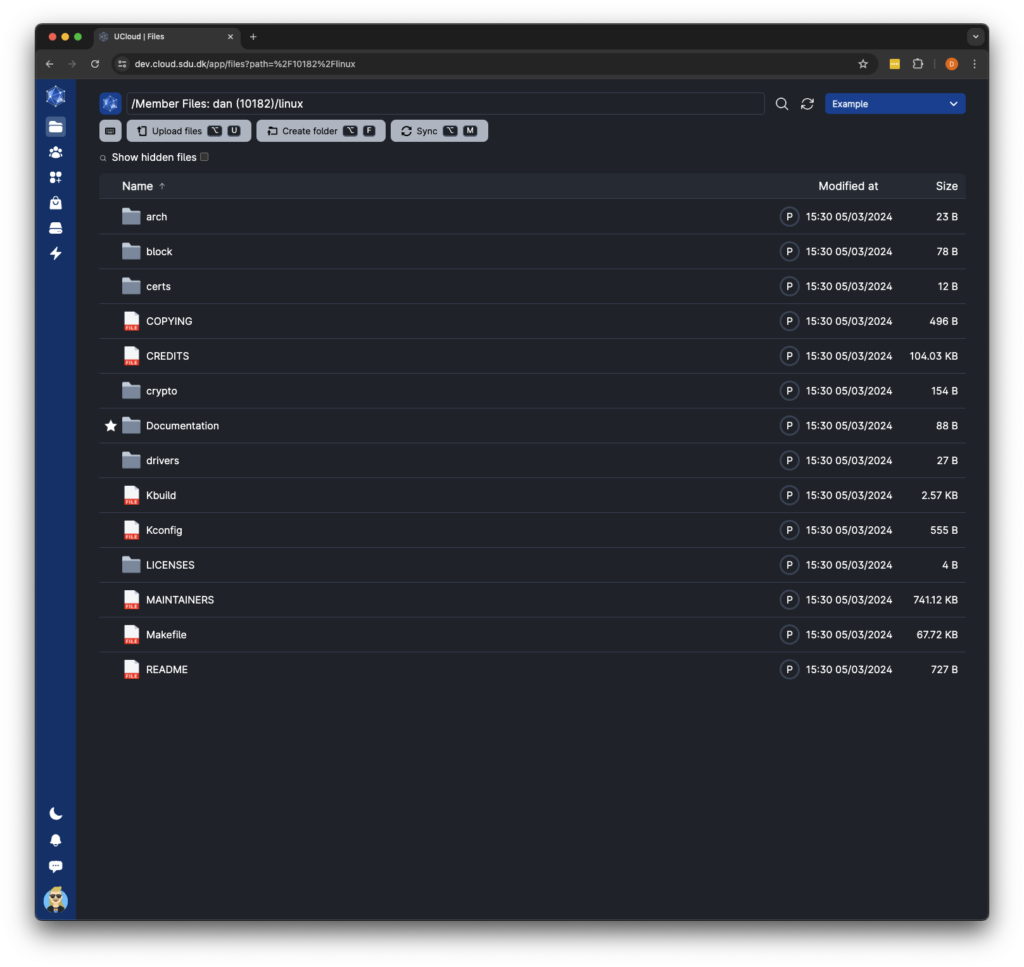

12:27 – UCloud: Storage in “My workspace”

13:28 – UCloud: Resources and applications for new resource allocations

13:50 – UCloud: Completing the resource application

21:05 – UCloud: Resource pools and limits (what does it cost?)

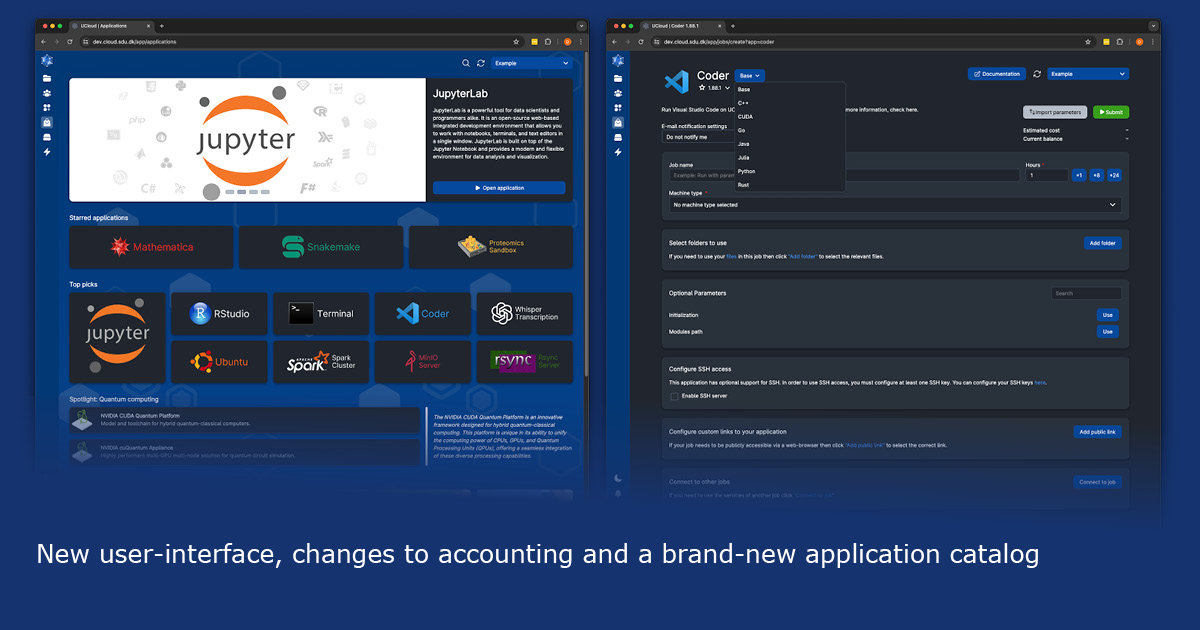

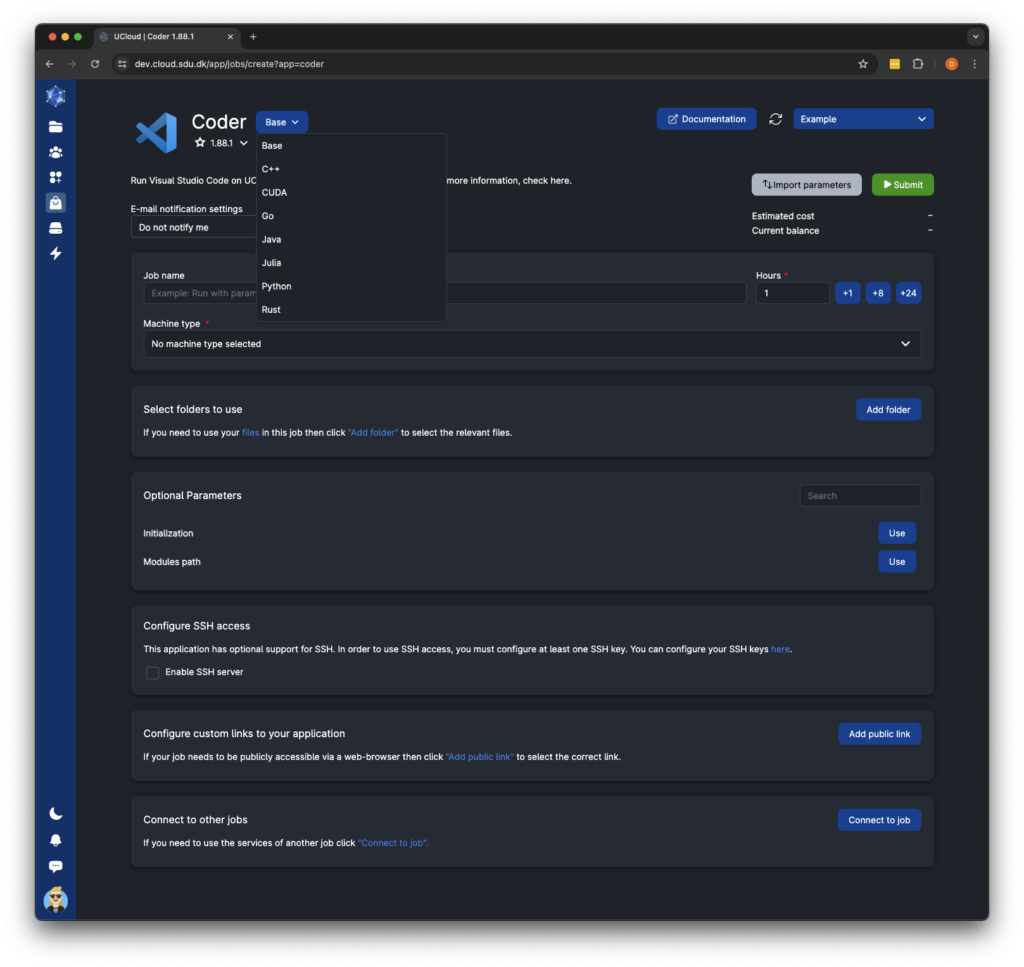

23:05 – UCloud: Apps, the app store, applications index in UCloud docs

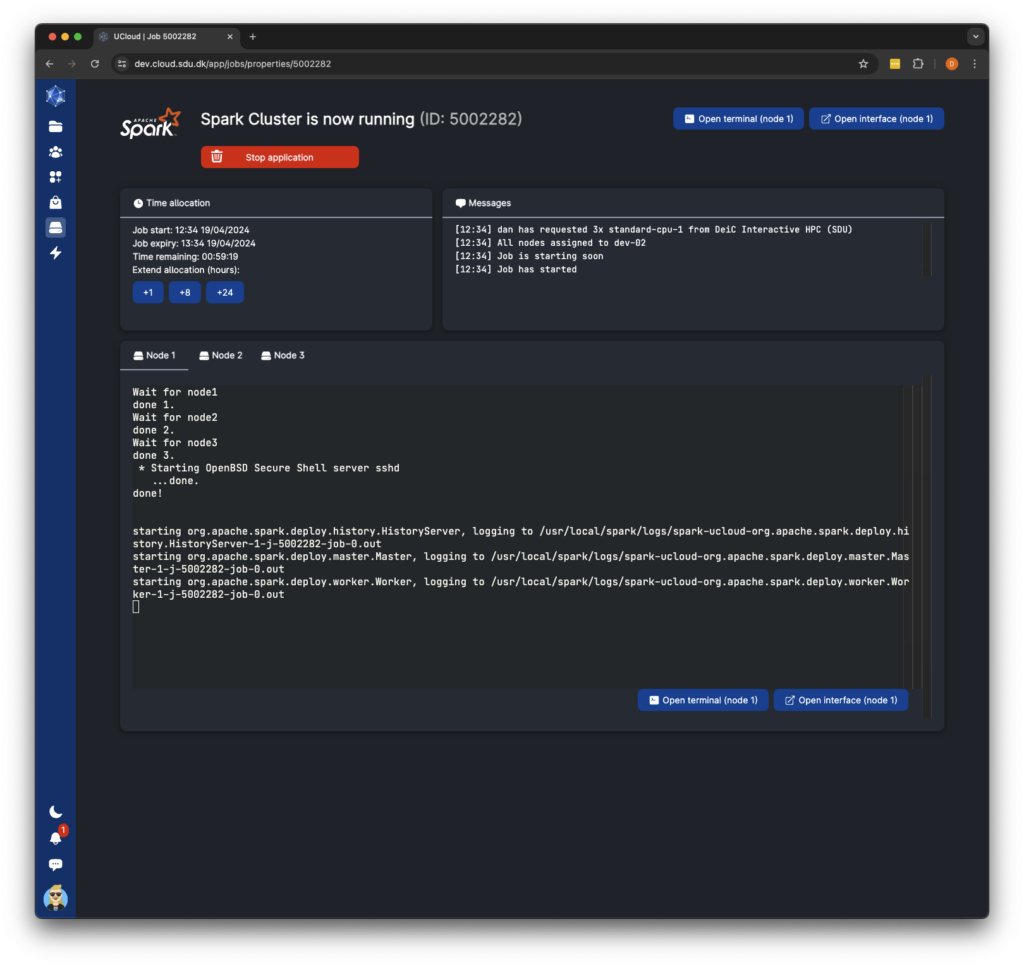

26:00 – UCloud: Advanced use cases and integration patterns

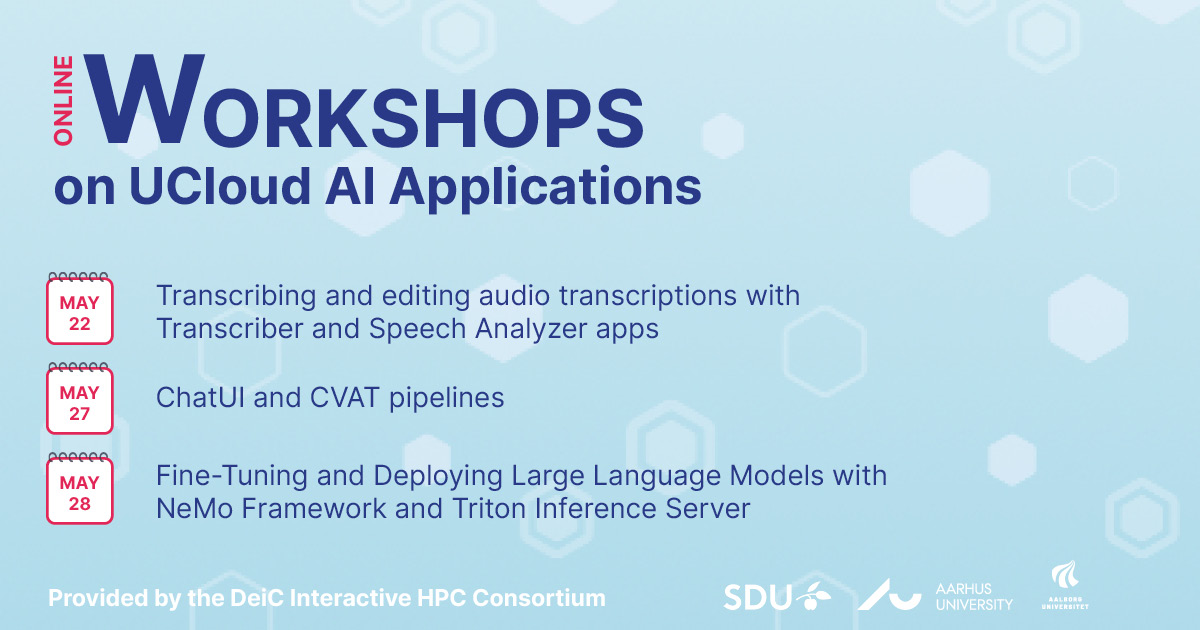

27:45 – UCloud: Transcriber: Intro and resource needs

30:08 – UCloud: Transcriber: Uploading files inside a project

31:20 – UCloud: Transcriber: Finding and launching Transcriber

32:00 – UCloud: Transcriber: Run Transcriber for the first time (Completing the app launch screen)

34:28 – UCloud: Transcriber: Running multiple Transcriber jobs simultaneously

35:00 – UCloud: Transcriber: Import previous Transcriber job parameters

35:35 – UCloud: Transcriber: Opening running jobs from the “Recent runs” pane

37:15 – UCloud: Transcriber: Transcriber output directories (Jobs folder)

38:26 – UCloud: Transcriber: Transcriber outputs in “Recent runs”

39:54 – UCloud: Transcriber: Output inspection and data download of zip file

41:36 – UCloud: Chat UI: Introduction

43:27 – UCloud: Chat UI: Run Chat UI for the first time (Completing the app launch screen)

48:40 – UCloud: Chat UI: First look at the Chat UI interface (disable new sign-ups and download a model)

52:36 – UCloud: Chat UI: Including documents to support Retrieval-Augmented Generation (RAG), i.e. supplementing the model with an additional document

57:18 – UCloud: Chat UI: Extend the job time on any UCloud job (if needed)

58:28 – UCloud: Chat UI: Text-to-image generation (Stable Diffusion with a standard LLM)

1:01:45 – UCloud: Chat UI: RAG for (best guess) document summarization (beware of model hallucinations)

1:04:45 – UCloud: Label Studio: Introduction

1:05:15 – UCloud: Label Studio: Run Label Studio for the first time (Completing the app launch screen)

1:07:55 – UCloud: Label Studio: First look at the Label Studio interface

1:09:40 – UCloud: Label Studio: Brief view of the Label Studio documentation

1:10:52 – UCloud: Label Studio: Introduction to the coming “Speech Analyser” application

1:15:45 – UCloud: Label Studio: Documentation

1:16:08 – Conclusion